Every investigator hopes that the results they obtain will support the hypothesis they put forth. More often than not, however, this does not happen – the data acquired do not conform to what was expected. What, then, should be done with the “negative” results?

To be clear, the results we are talking about were obtained through carefully considered, well-executed, and appropriately controlled (read: including both positive and negative controls) experiments. They simply do not bear out what was predicted; in some cases, they may even call into question the underlying, often already published, results that led to the hypothesis guiding the study.

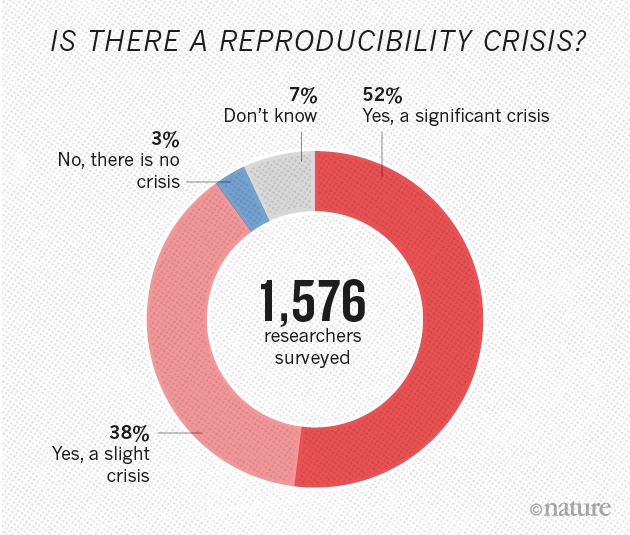

Of course, it may be that the work that was to be extended was never solid in the first place. That is, the prevailing view in a field may rest on incorrect and/or irreproducible results. Indeed, a number of studies indicate that an alarmingly large percentage of high-profile published results are not reproducible [1],[2]. In cancer research, for example, the pharmaceutical giants Amgen and Bayer Healthcare were unable to replicate the findings of a large number of studies published in elite journals. The implications are profound: how can companies that rely on published research to define molecular targets and develop therapeutic drugs do so against a backdrop of irreproducibility?

Why is this happening? Proposed explanations include, among others, pressure to publish, financial considerations, poor statistical analyses, insufficiently detailed protocols or technical complexity, selective reporting, and inadequate reagent authentication. The National Institutes of Health is aware of these problems and is calling on investigators seeking support to include in their proposals detailed descriptions of i. the scientific premise of the proposal; ii. the experimental design; iii. how biological variability will be considered; and iv. how biological and chemical reagents will be authenticated [3]. This is in addition to newly required statements providing evidence that a detailed data analysis plan is in place.

Although such negative results do not confirm or extend other studies, they are still very much of value. Other scientists in the field would welcome knowing what was done and what was observed. The most obvious benefit is that sharing these results would keep others from wasting time, energy, and resources on unfruitful approaches, and would help focus the field by better defining which results and models are reliable and which are not.

Despite the inherent value of such results, there is a perceived publication bias: the belief that only positive data are worthy of publication. Non-confirmatory or negative results are often not disseminated, at great cost to the scientific community.

One approach to ensuring that a study's conclusions are adequately disseminated is for appropriate journals to agree, in advance, to publish the results regardless of whether they confirm or refute the hypotheses that initiated the study. This would give a field access to a wealth of otherwise unreported information about what does and does not work in particular investigators’ laboratories. Many clinical trials operate this way, with final results disseminated regardless of the effects seen in patients. A second approach would be to develop journals that consider negative, confirmatory, and non-confirmatory results, data notes, and virtually any valid scientific or technical finding in a particular field. F1000Research is one journal that follows just such guidelines. The idea that all results are embraced is extremely attractive; after all, science is, at its core, simply a search for the truth.

SRT – November 2018

[1] M. Baker, Nature (2016). doi: 10.1038/533452a. PMID: 27225100.

[2] C.G. Begley and L.M. Ellis, Nature (2012). doi: 10.1038/483531a. PMID: 22460880.

[3] National Institutes of Health, New Grant Guidelines: What You Need to Know. https://grants.nih.gov/reproducibility/documents/grant-guideline.pdf