Previously in Linguistic Anthropology for Fall 2017, my fellow students and I learned about the US Census and had David Kraiker, a Data Dissemination and GIS Specialist from the Census, talk to the class about what the organization does. As 2020 fast approaches, so does the new census to be given out to people residing in the United States. Every decade since its inception, the U.S. Census Bureau has formulated a new questionnaire for people to answer. The purpose is to collect accurate demographic information and data that can be beneficial for policy making and record keeping. The data collected are publicly available and inform everything from the building of new schools to managing hospitals. As noted in recent news reports and blogs, they have also been used electorally to gerrymander districts. The important and daunting task of data collecting has a wide-reaching impact; what kinds of concerns are raised, then, when changes are made to the questions asked? A widely reported and controversial change is the addition of a question pertaining to participants’ citizenship status. The addition of the citizenship question for 2020 is now very likely as the Supreme Court is poised to allow the question into the survey.

Language

English: A Global Language

One of the concepts learned in Linguistic Anthropology Fall 2017 was the idea of a global language, which is a language spoken by many people across the world because it carries significant weight in government, education, or other social areas. Currently, the global language is English, more specifically American English, with hundreds of millions of speakers. It’s not surprising, as English is a common means of communication in business and scientific journals, but how did it become a global language?

A mini history lesson is needed here, as British English was the global language for a while. The phrase “The empire on which the sun never sets” was absolutely true given the colonial reach of the British Empire on every continent. Such a global presence and vast amount of resources meant that the British were not only a military power but a social power too. Through their own policies, they instituted mandatory teaching of English in some parts of the Empire. Since they were also a regional power, people were in a way coerced to learn the language of those who were dominating them.

Language Death & Dead Languages

In one of our textbooks for Linguistic Anthropology, Language in Society, the author Suzanne Romaine dedicates a part of chapter 2 to exploring the topic of language death. Language death occurs when a language ceases to be spoken and used by people, rendering it non-existent as a means of communication.

Language death is a scary concept as it can really happen to any language. What causes it has been debated by linguists, with explanations ranging from minority communities being suppressed and overridden by the majority in society to a phenomenon called “language shift,” where a community starts off as bilingual but gradually loses its native tongue.

Language of the Powerful

One of the most fascinating concepts learned in Linguistic Anthropology Fall 2017 is that of the language of the powerful and the powerless. Powerful language is characterized by being more active, assertive, and commanding, while powerless language is more hesitant, unsure, and self-doubting. To give an example, a powerful statement would be “Let’s go to Chili’s this Tuesday,” while a statement marked by powerlessness would be “Uh I guess I’m in the mood for Chili’s but I wouldn’t mind going somewhere else, what do you think?” Notice the difference? The first sentence is more of an “I will” while the second is more doubtful, but the difference also relates to the way each is uttered. Tone is all too important: while going over the question part of the second statement, did you imagine it being spoken in a higher tone with an unsure inflection? Those are points to be mindful of when detecting whether a person is using powerful or powerless speech.

Data & Excel

Data is fun! Excel is a friend with wonderful shortcuts! Those words have rarely if ever been uttered in the English language, but they’re actually true in a way. As the pros and cons of using Excel have been reported before in the blog, I figured it would be good to carry on that tradition. Working with self-reported data in this study is an experience that I can never forget, and I believe I can say the same for my fellow student researchers. The data that we worked with provides insight into how people come into contact with various languages through their life experiences. It’s intimate in its own way as you really get to see and understand people’s lives and shared stories.

But then comes the transcribing and coding part of research which is an interesting ride on its own. You see, Excel, our primary mode of transferring the data on flashcards, is a very handy tool but we had to make sure that ALL the data was copied over.

Limitless Language

One of the great advantages of being a part of this research is learning the number of languages a person knows, understands, speaks, or is just able to identify. You learn that your classmates are bilingual, trilingual, or even quadrilingual! The ability to communicate in more than one language is a fascinating subject for linguists and was discussed heavily in our Anthropology class. Indeed, this whole research is based on delving into this area and obtaining more information about it.

People who are bilingual though, or others who know more than two languages, aren’t as uncommon as one might expect, especially considering a person’s geographical location. The interesting part about gathering data from Seton Hall students is that the campus comprises a mixed ethnic/racial population with students coming from diverse backgrounds. Information on this shows a range of about 45%–50% of students identifying as belonging to non-white minority backgrounds! So to discover that the majority of data collected indicates that students are overwhelmingly versed in more than one language is astounding, especially given that some students understand languages that aren’t as well known as others, such as Uzbek, as documented by one student.

Chomsky vs Skinner: A Battle for Language (Pt. 1)

The field of linguistics has had many different perspectives on the topic of language based on a time period’s available evidence. As it was taught in Linguistic Anthropology, this field went through many viewpoints, such as evolving from historical linguistics to descriptive linguistics.

Our knowledge of linguistics keeps evolving with time and accurate evidence. Nothing can be a more apt example of this than the debate over how language forms between two great scientists, B.F. Skinner and Noam Chomsky. To start off with, Skinner is more widely known in the field of Psychology as one of the pioneers of Behaviorism, but as mentioned previously, he also theorized about language development. He spoke on how children learn language from the environment around them, mainly in a behaviorist framework. Basically, as a child learns new language skills, social influences will use reinforcement to help their learning move along, such as when a child says the word “book” and their teacher nods and rewards them for saying the right word and identifying the right object.

How Much Do You Know About Vowels?

Here’s an article discussing vowels in English as well as other languages:

8 Things You Might Not Know About Vowels

An extremely interesting point is number two, which discusses how the most common vowel sound in English doesn’t even have its own letter. Can you guess what it is?

Building our Site

When deciding what pages to include in our Menu, I had to really think about what pages are on regular websites. I decided that Our Mission Statement should be our homepage so that when you arrive at our site, you know about our project and our goals. I revised the mission statement several times and finally decided upon the finished product you see now.

My second thought was having a page explaining what exactly we mean by Language Maps and Language Clouds. Dr. Quizon thankfully authored this page with working links.

As a team, we decided to rename the blog page to “The Project”. This was a unanimous decision. We wanted to take people step by step through our process.

Our “Contact Us” page is for anyone who has questions or comments, or who wants to use our research, which is covered by Creative Commons. The “Contribute” page will be an open forum for anyone who would like to add their languages to our research. We are working now with a WordPress expert who is going to build our questionnaire, which will feed directly into a Microsoft Excel spreadsheet, already coded.

We encourage you to check back soon and contribute your own languages!

Using Viewshare

ViewShare is a website in which people can input a selection of data, like an Excel chart, and the program will allow the person to create different charts, maps, lists and timelines, depending on what kind of information the program can read from the data. Professor Quizon set up the account and I, Ellie Hautz, explored its features with a mock Microsoft Excel spreadsheet to see how we could use ViewShare in our research. We all worked on coding the information onto the Microsoft Excel file. I took the final version and uploaded it to ViewShare to see what I could do with it. I was so excited to see the number of charts I could make with it.

I looked at the map portion and it had plotted points that I had not intended it to. For instance, our version of New York was meant to specify New York, NY. However, the program read it as New York in the United Kingdom. So I thought that maybe putting in coordinates would plot more easily. I then had a discussion with the group to decide what coordinates we were going to use. We decided that everyone using North New Jersey English would be based in Bergen County and everyone using South New Jersey English would be based in Cape May County, since they were the most coastal north and the most coastal south. The majority of our participants were from New Jersey. However, a good deal of participants indicated other states or countries. For plotting these, we decided to use the capital of the state or country of origin of the language unless otherwise specified by the participant.

So I made an extra section of my own Microsoft Excel spreadsheet with the coordinates for these areas; however, it still was not working properly. I looked closely and saw that the program asked for the city and state and/or country of each data point. So I went through again and used the capital of every county, state, and country. Finally it worked and the map plotted correctly.
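The place-name fix described above can be sketched in a few lines of Python. This is only an illustration of the rule (use the place the participant named, otherwise fall back to the capital of the language's country of origin, and always spell out city and country so a mapping tool cannot guess the wrong "New York"). The function names and the small `ORIGIN_CAPITALS` table are hypothetical and are not taken from our actual spreadsheet.

```python
from typing import Optional

# Hypothetical lookup: language -> (capital city, country of origin).
# These few entries are illustrative only, not the project's real table.
ORIGIN_CAPITALS = {
    "Spanish": ("Madrid", "Spain"),
    "Italian": ("Rome", "Italy"),
    "Tagalog": ("Manila", "Philippines"),
}


def qualified_place(city: str, country: str, state: Optional[str] = None) -> str:
    """Join city, optional state, and country into one unambiguous string."""
    parts = [city, state, country]
    return ", ".join(p.strip() for p in parts if p and p.strip())


def plot_location(language: str, participant_place: Optional[str] = None) -> str:
    """Prefer the place the participant wrote; otherwise use the origin capital."""
    if participant_place and participant_place.strip():
        return participant_place.strip()
    city, country = ORIGIN_CAPITALS[language]
    return qualified_place(city, country)


print(qualified_place("New York", "United States", state="NY"))
print(plot_location("Spanish"))
print(plot_location("Spanish", "Santo Domingo, Dominican Republic"))
```

Writing the full "city, state, country" string by hand in Excel does the same job; the sketch just makes the fallback rule explicit.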

Unfortunately, after a week or so of the corrected data, ViewShare stopped being compatible with our first set of data.

Place names: New Jersey/New York to global speech communities

Our team member Ellie Hautz was tasked with figuring out how to map place names that were provided as regional descriptors for a language. In a sense, individuals were identifying where a particular speech community lives, either as a result of their own lived experience or inferred from information shared by others. The map that this query generates is quite distinct: using Viewshare we see a wider and richer distribution, encompassing both the perceived geographical origins of a language and the location of speech communities as witnessed or inferred by our respondents.

One of the most interesting decisions made by the team was how to capture the high incidence of descriptors for New Jersey and New York varieties of English. Viewshare required specific latitude/longitude codes in order to generate a map. As a New Jersey-based university, our team felt obliged to step up to the richness of the local data before us. After exploring various strategies, we decided to adapt principles used in Rick Aschmann’s American Dialects website. Like Aschmann’s site as well as the broader literature on English dispersal in the US, we used the Eastern seaboard as a starting point. However, to graphically capture what our respondents refer to as “northern NJ,” we decided to map it onto New Jersey’s northernmost seaside county (Bergen) with the capital city of Hackensack. Similarly, verbatim descriptors of “southern NJ” were mapped onto the southernmost seaside county (Cape May) with the same-named capital city. Aschmann used slightly different terms, however, because he was plotting nationally beyond a single state. For purposes of this specific data set, what appears elsewhere as “Inland North” was coded as “Northern NJ”; what is referred to elsewhere as “Atlantic Midland” was coded as “Southern NJ.” Whenever New York was mentioned, the variety descriptor “Greater New York City” was used.
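As a rough sketch of how this coding rule could be written down, the Python below turns a respondent's descriptor into one of the three variety labels and looks up an anchor point for the two New Jersey labels. The function name `code_variety`, the `ANCHORS` table, and the rounded coordinates are assumptions made for illustration; the coordinates are approximate city locations, not the values from our spreadsheet.

```python
import re
from typing import Optional

# Anchor city and approximate (latitude, longitude) for each NJ label.
# Coordinates are rounded, illustrative values, not the project's own.
ANCHORS = {
    "Northern NJ": ("Hackensack, Bergen County, NJ", 40.89, -74.04),
    "Southern NJ": ("Cape May, Cape May County, NJ", 38.94, -74.91),
}


def code_variety(descriptor: str) -> Optional[str]:
    """Map a verbatim descriptor to a variety label, or None if no rule applies."""
    text = re.sub(r"\s+", " ", descriptor.strip().lower())
    # Any mention of New York takes this label, as described above.
    if "new york" in text:
        return "Greater New York City"
    if "northern nj" in text:
        return "Northern NJ"
    if "southern nj" in text:
        return "Southern NJ"
    return None


label = code_variety("Southern  NJ")
print(label, ANCHORS[label])
```

Descriptors that match none of the rules come back as `None`, so they can be set aside and discussed rather than silently guessed at.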

Interestingly, our college campus is located in Essex County, NJ, a geographical region that falls somewhere at the cusp of the language varieties of Northern NJ/Inland North and Greater New York City.

How Do You Say…’Hello’?

In gathering our data, we have recorded many different languages. Here is how you say hello in some of them!

English ~ Hello/Hi

Italian ~ Ciao

German ~ Hallo

French ~ Bonjour

Tagalog ~ Kamusta

Spanish ~ Hola

Russian ~ Здравствуй (Zdravstvuy)

Welsh ~ Helo

Dutch ~ Hoi

Japanese ~ こんにちは (Kon’nichiwa)

Korean ~ 안녕 (Annyeong)

Polish ~ cześć

Gaelic ~ Haigh

Portuguese ~ Oi

Chinese (Cantonese/Mandarin) ~ 你好 (Nǐ hǎo)

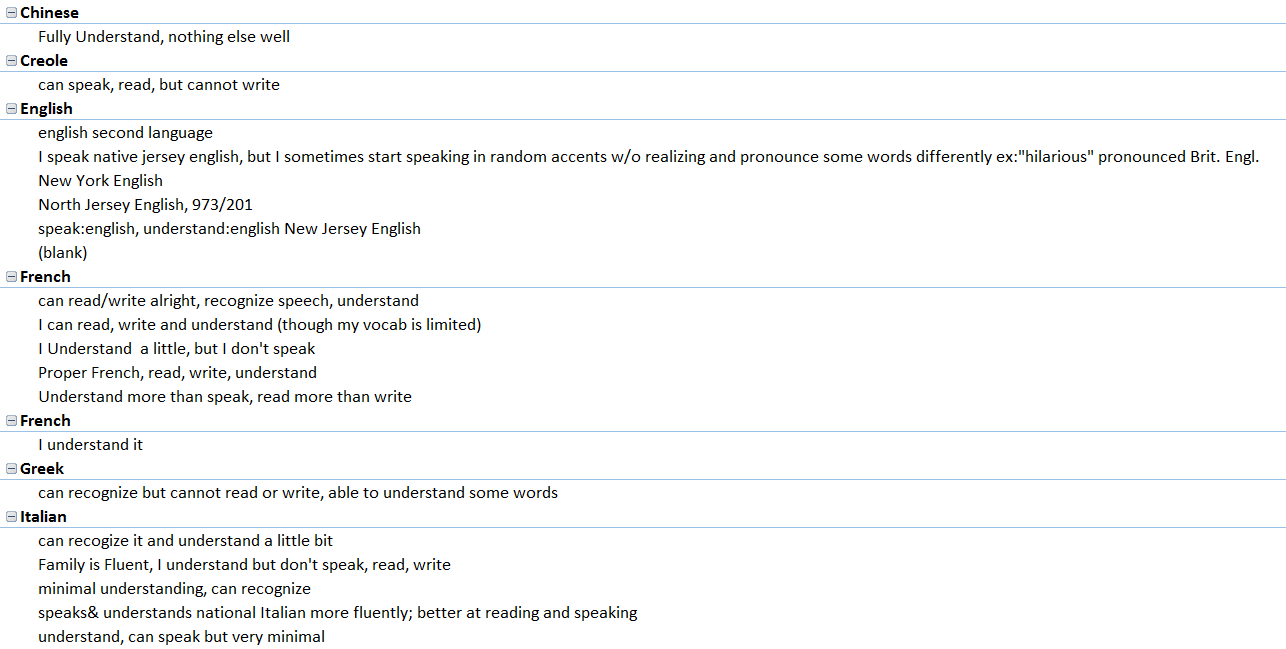

The Coding Process

As of July, our research interns officially began coding the data extracted from the note cards.

The first step was moving all the raw data from the individual note cards to an Excel spreadsheet. Once we finished transcribing the data verbatim from the cards, we noticed that the individual descriptors on each card would make coding the spreadsheet difficult. What undoubtedly made the cards unique also made them so varied that coming up with a coding system would be an ambitious task. We wanted to keep the authenticity of the raw data while also coding the entries in an easily understood manner, making it significantly easier for us to plot.

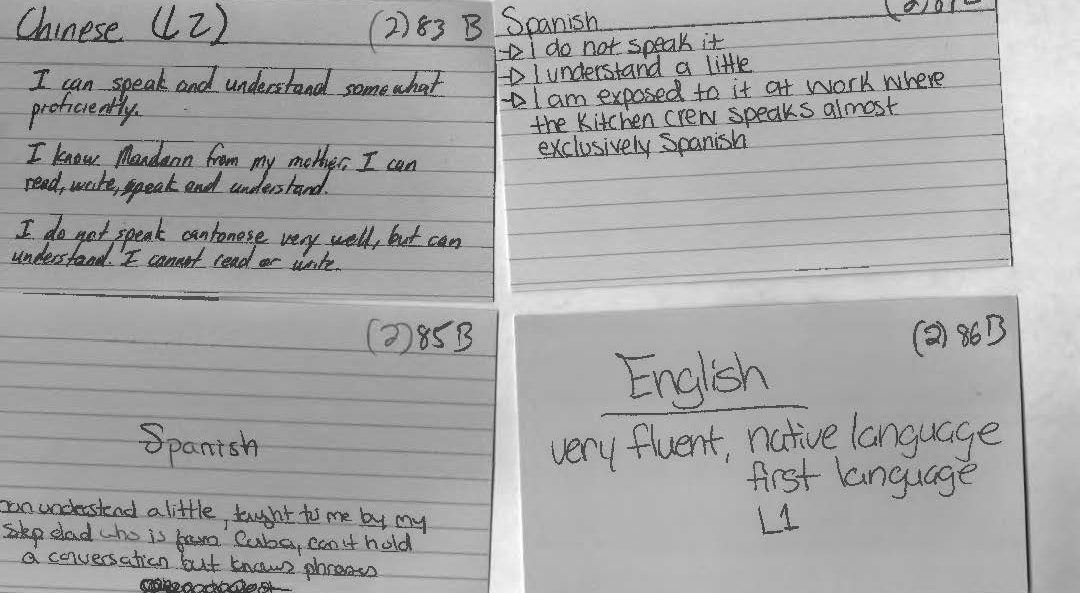

Here are the unique descriptors that students wrote on their note cards.

Before establishing the coding system, we had to answer some questions: If the language is Spanish, but the card identifies The Dominican Republic or Puerto Rico, do we code that region as Spain or as the other two countries? What can we assume from the cards if we can assume anything? Since each research intern takes part in all steps of the process, establishing a concise coding system is essential so that every card is coded the same way.
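One way to picture a coding system that answers these questions is to give every card the same fields, keeping the student's words untouched next to the coded values. The Python sketch below is only an assumption about how such a record could look, not the system we settled on: the `CodedCard` class, its field names, and the three sample cards are all made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CodedCard:
    verbatim: str                 # exactly what was written on the card
    language: str                 # coded language name
    region: Optional[str] = None  # coded place, only if the card names one


# Invented sample cards, not entries from the real data sets.
cards = [
    CodedCard("Spanish - Dominican Republic", "Spanish", "Dominican Republic"),
    CodedCard("Spanish, Puerto Rico", "Spanish", "Puerto Rico"),
    CodedCard("Spanish", "Spanish"),
]

# Counting by language and by region separately keeps both answers available.
language_counts = Counter(card.language for card in cards)
region_counts = Counter(card.region for card in cards if card.region)

print(language_counts)
print(region_counts)
```

Because language and region sit in separate fields, a card that says Spanish and names Puerto Rico counts as Spanish without being forced onto Spain.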

Generally, the beginning of our research meetings is spent discussing any coding problems that come up. We are currently still coding the First Data Set and have started coding the Second Data Set.

The Origin of the Project

In the Fall 2015 Linguistic Anthropology class taught by Dr. Quizon, students were asked to share information about any and all languages that they knew. She gave out note cards and instructed the class to write down one language per card. Underneath the name of the language, they were asked to write down anything they wished to say about this language. They used descriptors of their own design making these cards rich with open-ended qualitative data. On the reverse of each card, they were asked to write their names.

With support from Seton Hall’s Digital Humanities Fellowship initiative, Dr. Quizon and three student interns who completed the course in the previous semester took a closer look at this data and explored ways to visualize the information. Were there intriguing or interactive ways to plot linguistic information? Could the data be mapped? Were there patterns to be discovered when expressed in visual form?

The class of 35 students was surveyed twice: once in the beginning of the semester, and again towards the end of the semester. The Language Maps, Language Clouds research team took these two sets of note cards, devised ways to capture, organize and analyze the information using linguistic concepts, explored ways to visualize the results of our queries, and aimed to share our findings online. Our goal is to share both processes and results as we seek to deepen our understanding of the data in an interesting, interactive setting.

Even though we all participated in every aspect of the project, we each had an area of expertise. Ellie learned how to use and troubleshoot Viewshare and later, with Dr. Quizon, explored Tableau. She worked with Anastasia who was in charge of Excel and added knowledge of its features as needed for the project. I was in charge of learning how to build a blog on WordPress.