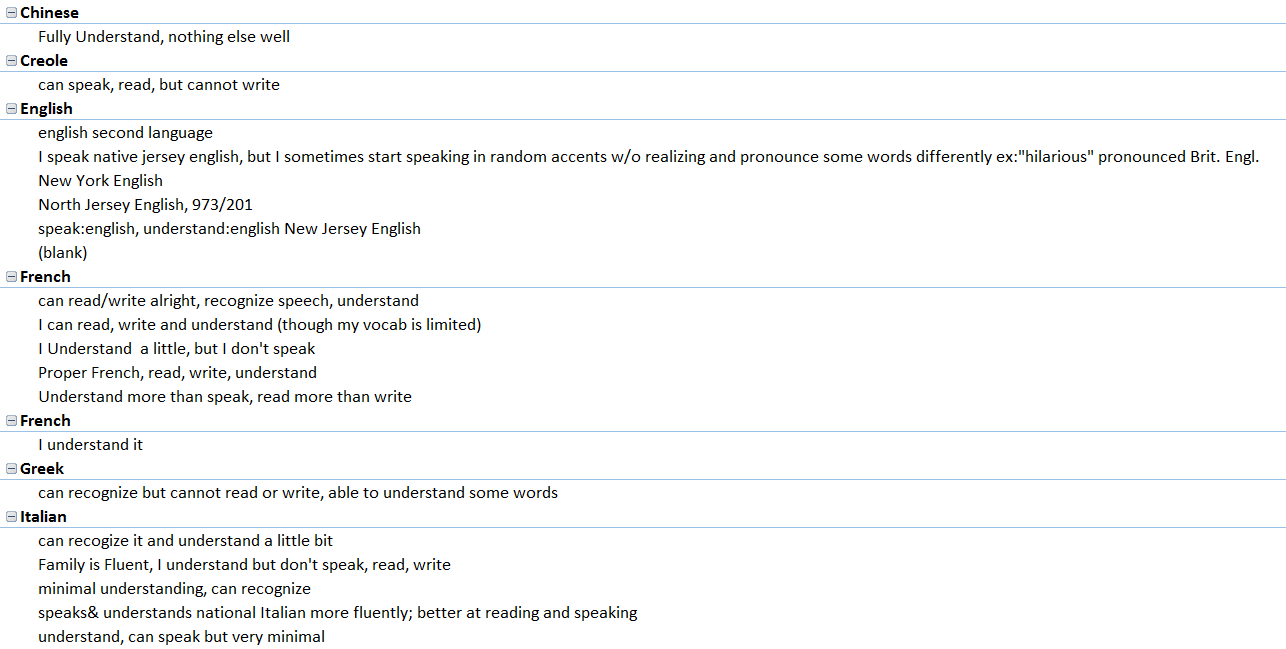

In the Fall 2015 Linguistic Anthropology class taught by Dr. Quizon, students were asked to share information about any and all languages that they knew. She gave out note cards and instructed the class to write down one language per card. Underneath the name of the language, they were asked to write down anything they wished to say about it. They used descriptors of their own design, making these cards rich with open-ended qualitative data. On the reverse of each card, they were asked to write their names.

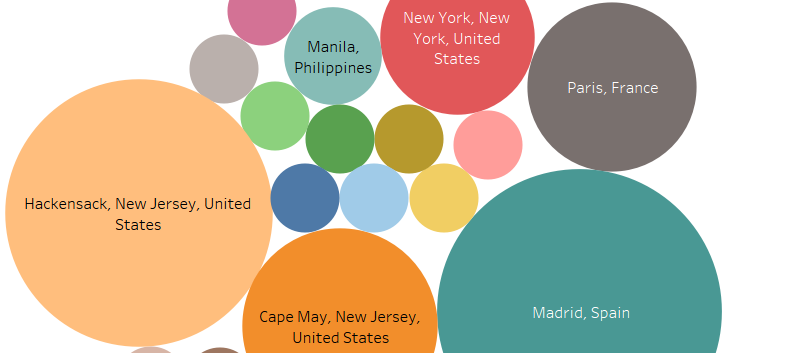

With support from Seton Hall’s Digital Humanities Fellowship initiative, Dr. Quizon and three student interns who had completed the course the previous semester took a closer look at this data and explored ways to visualize the information. Were there intriguing or interactive ways to plot linguistic information? Could the data be mapped? Were there patterns to be discovered when expressed in visual form?

The class of 35 students was surveyed twice: once at the beginning of the semester, and again towards the end. The Language Maps, Language Clouds research team took these two sets of note cards, devised ways to capture, organize, and analyze the information using linguistic concepts, explored ways to visualize the results of our queries, and aimed to share our findings online. Our goal is to share both processes and results as we seek to deepen our understanding of the data in an interesting, interactive setting.

Even though we all participated in every aspect of the project, we each had an area of expertise. Ellie learned how to use and troubleshoot Viewshare and later, with Dr. Quizon, explored Tableau. She worked with Anastasia, who was in charge of Excel and added knowledge of its features as needed for the project. I was in charge of learning how to build a blog on WordPress.