Could Facial Recognition Be Perpetuating Society’s Biases?

Karina Agarwal

Trending Writer

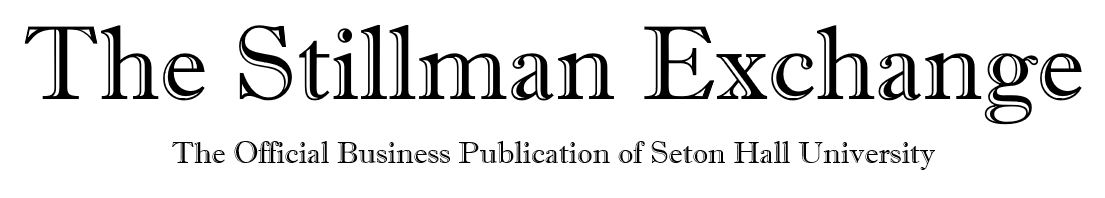

Research has found that certain facial-recognition software perpetuates society’s existing inequities based on race, class, and gender. Joy Buolamwini, an M.I.T. Media Lab researcher, discovered that the facial-recognition algorithm she was working on could not detect her face until she put on a white mask. She soon realized that many artificial-intelligence programs are trained to identify patterns using data sets that skew toward light-skinned and male faces. Buolamwini stated, “When you think of A.I., it’s forward-looking, but A.I. is based on data, and data is a reflection of our history.”

Filmmaker Shalini Kantayya directed a documentary based on these findings, “Coded Bias,” in which she explores how machine-learning algorithms can extend the biases rampant in our society. Many of these algorithms are commonly used in advertising, hiring, financial services, and policing. Tech companies tend to market facial recognition software as a convenience that can identify individuals in photos or replace a password to unlock smartphones and computers. This technology permeates our daily lives.

The consequences can be serious. The New York Times reported that Detroit police officers wrongfully arrested Michigan resident Robert Julian-Borchak Williams earlier this year on suspicion of shoplifting. The arrest resulted from flawed police work that relied on a faulty facial recognition match. However, some law enforcement officials assert that this technology is critical to solving crimes and delivering justice.

James Tate, a Detroit City Council member, had to decide whether the Police Department should continue using facial recognition software. A heated debate over the topic ensued in September. Tate said he believed that this software, with appropriate guidelines, could prove a practical tool alongside the many other measures used by law enforcement. In response to Mr. Williams’ wrongful arrest, Tate stated, “It’s a terrible thing what happened to Mr. Williams.” He continued, “What I don’t want to do is hamper any effort to get justice for people who have lost loved ones. I’ve lived in Detroit my entire life and seen crime to be an issue my entire life.”

The National Institute of Standards and Technology reported that many facial recognition systems falsely identified African-American and Asian faces 10 to 100 times more often than Caucasian faces. The highest error rates were observed for Native Americans within a database of photos used by law enforcement agencies in the United States. Facial recognition algorithms were also less accurate at identifying women than men, and the software falsely identified older adults up to ten times more often than middle-aged adults.

The NIST study, a federal report, was one of the most robust of its kind. The researchers had access to over 18 million photos of approximately 8.5 million individuals, drawn from sources in the United States such as mug shots and visa applications.

Civil liberties experts have warned that this technology, which can track people at a distance without their knowledge, has the potential to lead to ubiquitous surveillance. This could erode freedom of movement and speech. Jay Stanley, a policy analyst at the American Civil Liberties Union, said in a statement, “One false match can lead to missed flights, lengthy interrogations, watch list placements, tense police encounters, false arrests or worse.” He added that “government agencies, including the F.B.I., Customs and Border Protection, and local law enforcement must immediately halt the deployment of this dystopian technology.”

As the prevalence and use of facial recognition technology increase, companies and governments must safeguard the privacy and rights of individuals. Questions of when, where, and how these systems are deployed must be taken into consideration. Ethics must be at the center of these decisions.

Contact Karina at agarwaka@shu.edu