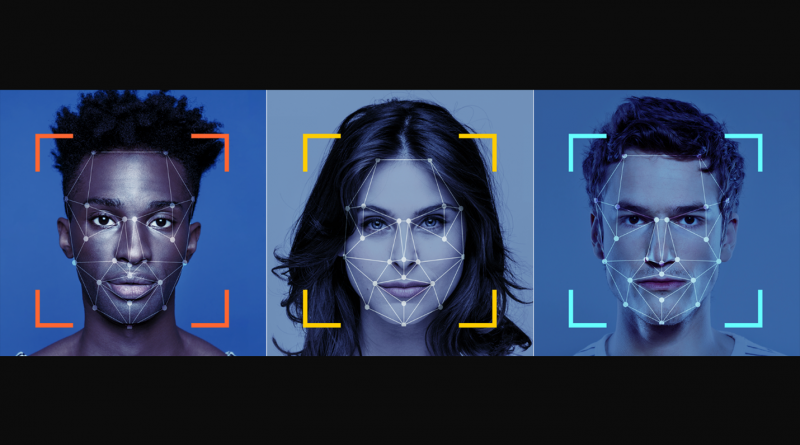

Could Facial Recognition Be Perpetuating Society’s Biases?

Research has found that certain facial-recognition software perpetuates society’s existing inequities based on race, class, and gender. Joy Buolamwini, an M.I.T. Media Lab researcher, discovered that the facial-recognition algorithm she was working on could not detect her face until she put on a white mask. She soon realized that many artificial-intelligence programs are trained to identify patterns using data sets that skew light-skinned and male. Buolamwini stated, “When you think of A.I., it’s forward-looking, but A.I. is based on data, and data is a reflection of our history.”
